Virtual reality is commonly a disembodying and physically removed experience. How can we instead use VR to augment a person’s senses and enable an experience that accentuates the relationship of participants’ bodies with each other and with their environment?

This was the starting point for Sand VR, a collaborative project between Anna Henson, a multimedia artist and Master’s student studying Computational Design at the School of Architecture at Carnegie Mellon University, and myself.

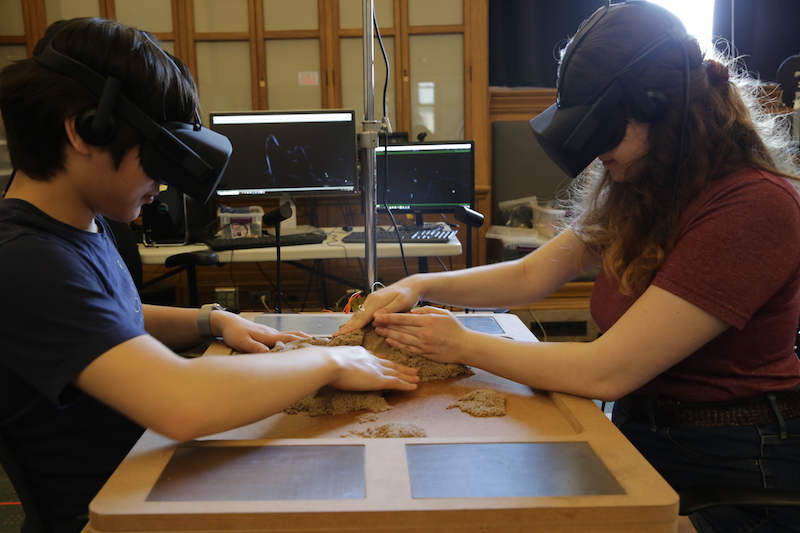

Our answer was an installation piece that places two participants in a shared VR space, where they take part in a sensory activity together. Because a sense of embodiment was one of our goals, we wanted to forgo traditional controllers and use a more tangible material as our interface. Embodiment in social VR experiences is typically achieved through virtual avatars, as is the case in Facebook Spaces or VRChat, but we wanted to take advantage of our close table space and use hands and actual touch to promote a connection between players and their environment, bringing some mixed-reality elements into the virtual space. Lastly, we wanted to create a more intimate experience by choosing an activity that would encourage collaboration and communication between the players.

We looked at a number of references in the tangible interface space for inspiration, including work by the Tangible Media Group at the MIT Media Lab and interaction demos by Leap Motion, but our biggest source of inspiration came from the art world: the Brazilian artist Lygia Clark. Clark designed situations for people to engage directly and tangibly with her artworks. Through interactive pieces such as Air and Stone and Abyss Mask, Clark uses physical material together with participants’ bodies to explore the human senses as augmented by moving parts. This concept of individual objects relating to each other to create a moving system that is more than the sum of its parts was something we wanted to convey in our virtual experience as well.

The first few weeks of the project were spent exploring various tangible mediums, namely children’s toys, and experimenting with how we could create experiences around them. Kinetic Sand was one medium that yielded great results: it was tactile and fun to play with, and the simultaneous moldability and flexibility of the material inspired many potential activities for our players. It was also important that the material was dry, clay-like, and non-messy; our playtesters showed immediate recognition and comfort when touching the material for the first time in a blindfolded scenario.

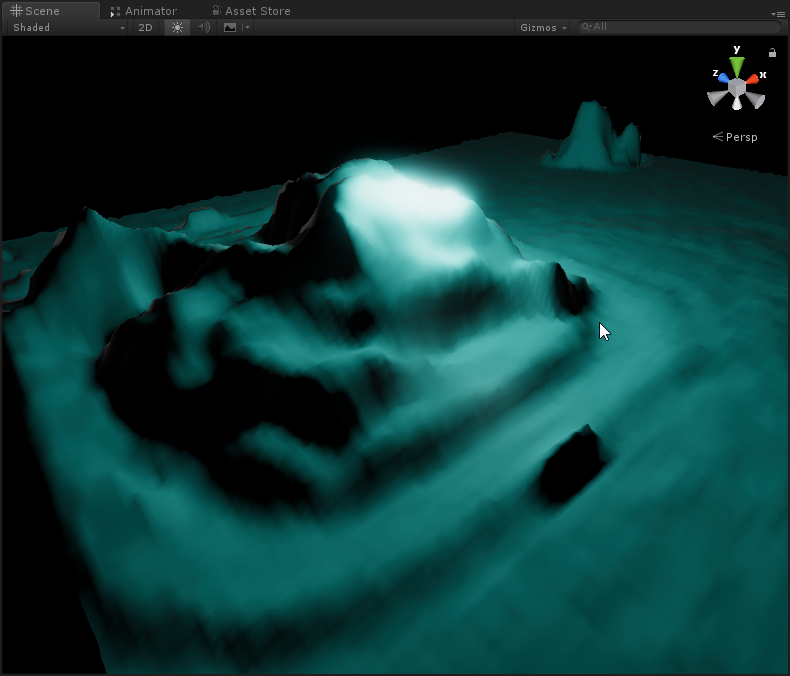

Once we settled on Kinetic Sand, we set about representing the material in VR: the sand would be depth-tracked using a Kinect and then reconstructed as a 3D mesh inside the virtual world, and players would rest their non-active hand on a smooth metal plate that we constructed. During playtests, we found that even accidental touches between players were special moments that we wanted to highlight in the virtual world. We used the Makey Makey to detect these touches; whenever one occurred, a light would glow inside our virtual space, subtly reinforcing these moments of physical contact and revealing the sand sculpture that the participants had molded.

For our physical setup, we used a custom wooden tray crafted by Anna to contain the Kinetic Sand. Mounted on the sides of the tray were two metal pads to detect touches, and above the tray, facing down, was a Kinect to track the sand for depth reconstruction.

In the virtual experience, we have little balls (nicknamed “Guppies”) that are physically affected by the sand terrain. Participants can move the guppies around by cupping them in their hands, or by making rivers and pathways with the sand.

In the technical implementation, we faced a few challenges. First, the raw Kinect depth data was jittery and noisy, which made depth reconstruction and physics simulation unstable. After some experimentation, we found that averaging the last 15 depth samples yielded a good balance between a smooth surface and a responsive feel.
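To make the smoothing step concrete, here is a minimal Python sketch of a per-pixel moving average over the most recent frames. It is an illustration rather than our actual code: the `DepthSmoother` class and its method names are hypothetical, and it assumes each depth frame arrives as a flat list of numbers.

```python
from collections import deque


class DepthSmoother:
    """Averages the most recent `window` depth frames, pixel by pixel.

    Hypothetical helper for illustration; frames are flat lists of depth values.
    """

    def __init__(self, num_pixels, window=15):
        self.window = window
        self.frames = deque()                # the frames currently in the window
        self.running_sum = [0] * num_pixels  # per-pixel sum over those frames

    def add_frame(self, frame):
        """Add one depth frame and return the smoothed frame."""
        if len(frame) != len(self.running_sum):
            raise ValueError("unexpected frame size")
        self.frames.append(list(frame))
        for i, depth in enumerate(frame):
            self.running_sum[i] += depth
        # Once the window is full, subtract the oldest frame from the sum.
        if len(self.frames) > self.window:
            oldest = self.frames.popleft()
            for i, depth in enumerate(oldest):
                self.running_sum[i] -= depth
        count = len(self.frames)
        return [total / count for total in self.running_sum]
```

Keeping a running sum means each new frame costs one pass over the pixels regardless of the window length. The trade-off is latency: at 30 fps, a 15-frame window spans half a second of history, so a larger window gives a calmer surface but a slower response to a moving hand.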

The next challenge was networking. The Kinect 2 captures depth data at a resolution of 512 by 424 pixels, at an average framerate of 30 fps. To keep the two players’ sand meshes in sync, we had to send this data from one computer to the other continuously, which works out to a data rate of roughly 12 MB/s. We achieved this rate by splitting the data into chunks of 4 KB each and sending the chunks over UDP. The receiving end would reconstruct the depth image from the received chunks and upload the resulting buffer into a GPU compute buffer to render the mesh. On the hardware side, this was made possible by physically connecting the two computers through a gigabit router and Ethernet cables.
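The arithmetic and the chunking scheme can be sketched in Python as follows. This is a simplified illustration under stated assumptions, not our production code: it assumes 16-bit depth values (2 bytes per pixel), and the 8-byte packet header, `split_frame`, and `FrameAssembler` are hypothetical names introduced for the example.

```python
import struct

WIDTH, HEIGHT = 512, 424        # Kinect 2 depth resolution
BYTES_PER_PIXEL = 2             # assumption: 16-bit depth values
FPS = 30
CHUNK_SIZE = 4096               # 4 KB of depth data per datagram
HEADER = struct.Struct("!IHH")  # hypothetical header: frame id, chunk index, chunk count

FRAME_BYTES = WIDTH * HEIGHT * BYTES_PER_PIXEL  # 434,176 bytes per frame
BYTES_PER_SECOND = FRAME_BYTES * FPS            # 13,025,280 bytes/s, about 12.4 MiB/s


def split_frame(frame_id, data, chunk_size=CHUNK_SIZE):
    """Split one depth frame into header-prefixed packets."""
    count = (len(data) + chunk_size - 1) // chunk_size
    return [
        HEADER.pack(frame_id, i, count) + data[i * chunk_size:(i + 1) * chunk_size]
        for i in range(count)
    ]


class FrameAssembler:
    """Rebuilds a frame from packets that may arrive in any order."""

    def __init__(self):
        self.frame_id = None
        self.chunks = {}

    def add(self, packet):
        """Store one packet; return the full frame once every chunk is present."""
        frame_id, index, count = HEADER.unpack_from(packet)
        if frame_id != self.frame_id:
            # A different frame has started: discard any incomplete one.
            self.frame_id = frame_id
            self.chunks = {}
        self.chunks[index] = packet[HEADER.size:]
        if len(self.chunks) == count:
            data = b"".join(self.chunks[i] for i in range(count))
            self.chunks = {}
            return data
        return None
```

Under the 16-bit assumption, one frame divides evenly into 106 chunks of 4 KB. Each packet would be handed to a UDP socket with `socket.sendto` on the sending side and fed to `FrameAssembler.add` on the receiving side. Because UDP does not guarantee delivery, this sketch simply drops a frame that is still incomplete when the next one begins, which is acceptable when a fresh frame arrives 30 times a second.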

Overall, this project was a success. We were able to bridge the gap between Virtual and Mixed Reality and explore the tangible interaction space using a unique medium. The technical challenges of the project underscored the hardware and software requirements for a high-fidelity, low-latency experience, as well as the interdepartmental collaboration and design thinking needed to make the experience a compelling one.